The Shader Permutation Problem - Part 1: How Did We Get Here?

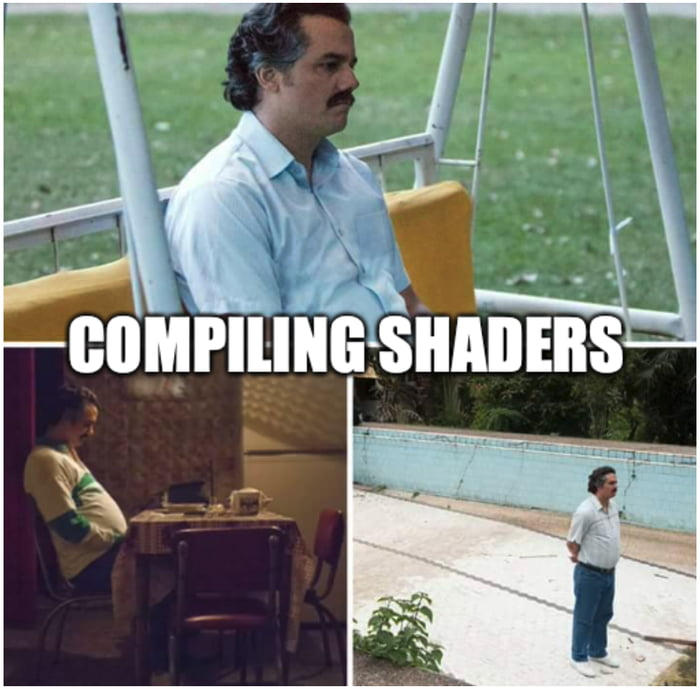

It is no secret that we tend to have a bit of a problem with shader permutations in real-time graphics. It’s such a bad problem that it not only affects graphics programmers, but also trickles down to all of the other content creators that use an engine. If you don’t believe me, just go ahead and search for “unreal compiling shaders meme” on Google images and see what comes up. There are many great ones to choose from, but personally I am a bit partial to this one:

If people are making memes about an issue then you know it’s pretty bad, and the shader permutation situation can indeed be pretty bad. It’s frustrating because collectively we have put a lot of effort into fixing or mitigating the problem, and yet it still hasn’t really gone away. It’s also frustrating because I’ve seen people trivialize it with suggestions like “just don’t make so many shaders!” or “just use branching!” which I think misses a lot of the nuance and context of the problem. To help with those latter two, I thought I would spend some time talking about why permutations continue to be a problem and also give a little bit of history on how we got to this point. In part 2 I’ll then talk a bit about the various options we have available that can help us to get out of this mess, and expand on what’s good and bad about them.

What’s A Few Shaders Among Friends?

To start, let’s go over a bit of background on the problem. When I talk about “shader permutations” (also sometimes called “shader variants”), what I’m referring to is taking a pile of shader code and compiling it N times with different options. In most cases these permutations are tied directly to features that are supported by the shader, often by writing the code in an “uber-shader” style with many different features that can be turned on and off independently. As a super-simple example let’s look at a little HLSL pixel shader that uses preprocessor macros to turn different features on and off:

// ENABLE_NORMAL_MAP, ENABLE_EMISSIVE_MAP, ENABLE_AO_MAPPING are

// macros whose values are defined via compiler command line options

static const bool EnableNormalMap = ENABLE_NORMAL_MAP;

static const bool EnableEmissiveMap = ENABLE_EMISSIVE_MAP;

static const bool EnableAOMap = ENABLE_AO_MAPPING;

float4 PSMain(in PSInput input) : SV_Target0

{

float3 normal = normalize(input.VtxNormal);

if(EnableNormalMap)

normal = ApplyNormalMap(normal, input.TangentFrame, input.UV);

float3 albedo = input.Albedo;

float3 ambientAlbedo = albedo;

if(EnableAOMap)

ambientAlbedo *= SampleAOMap(input.UV);

float3 lighting = CalcLighting(albedo, ambientAlbedo, normal);

if(EnableEmissiveMap)

lighting += SampleEmissiveMap(input.UV);

return float4(lighting, 1.0f);

}

We have 3 features that can be enabled or disabled here, and if all of those features are independent we need to compile 2^3 = 8 permutations. You can hopefully see the problem already: each new feature we add means we double the permutation count! We can also generalize this a bit to non-binary features that have more than 2 different states, in which case the formular for computing the total number of permtuations looks something like this:

$$ NumPermutations = NumStates_{featureA} * NumStates_{featureB} * NumStates_{featureC} … $$

With that kind of exponential growth it’s not hard to imagine how the total permutation count can get up into the thousands or millions as you add more and more features. That’s pretty obviously not great when you start thinking about the impact this could have on developer workflow. As you add more and more features and more and more shader code to implement those features, you get this kind of cascading effect where you’re asking the compiler to churn through this big monolith shader program and over and over again. That’s a lot of wasted work of parsing and optimizing the same code over and over again, and unless you’ve got threadrippers and/or a local network of machines to distribute compilation across it can turn into hours of time for each change to the shader code. This is really not where you want to be if you’re trying to rapidly iterate on optimizations or new features, and you especially don’t want to pass that on to content creators that need to cook all of the necessary data for the game to run.

Speaking of content creators, yet another source of unbounded shader growth comes from engines that allow users to create their own custom shaders that are bound to materials. Some expose this by allowing users to hand-author shaders in a shader language, while others allow you to create “shader graphs” using a visual node-based interface. Depending on how the renderer is setup, these can potentially serve as an additional multiplier on top of the permutation count that comes from the engine itself. For example a shader graph might only allow you to generate shading parameters (such as color, normals, roughness, etc.) while the engine combines that with hand-written shader permutations that implement the actual lighting calculations. If you have 100 permutations for the lighting phase and 100 shader graphs for a project…well guess what, you now have 10k shaders.

The thing is that the time spent compiling isn’t even the entire problem, it’s just one part of it. Compiling all of those shaders can start to generate a lot of binary data, especially if you generate debug info. You may start running into issues with storing all of that data, or loading it and keeping it in memory at runtime. But wait, it gets worse! Recent PC and mobile graphics APIs like D3D and Vulkan actually implement a two-step compilation pipeline. Some people are surprised to learn this when they start with graphics, but offline shader compilers like dxc, fxc, and glslc actually generate some kind of intermediate bytecode rather than machine code that can be run on the GPU shader cores. GPUs actually have wildly different ISAs under the hood, with the instructions often changing from one hardware generation to the next even within the same vendor. To handle this setup, the driver will employ some kind of JIT compiler that takes your DXBC, DXIL or SPIR-V and converts it into the final ISA that the shader core can execute. The full pipeline typically goes something like this:

- The shader source code is compiled offline by a shader compiler, such as fxc, dxc, or glslc

- The output of this step is a hardware-agnostic intermediate bytecode format, either DXBC (fxc), DXIL (dxc), or SPIR-V (dxc or glslc)

- The compiled bytecode is loaded into memory by the engine

- The engine creates a Pipeline State Object (PSO) by passing the required state as well as the compiled bytecode for any required shader stages

- During this step, the driver runs its own JIT compiler that converts the bytecode into its own hardware-specific ISA that runs on the physical shader cores

- The engine binds a PSO to a command buffer, and issues draw/dispatch commands that used the shaders referenced by the PSO

- The command buffer is submitted to the GPU, where the shader program actually gets executed

It’s easy to focus on that first step when thinking about the downsides of permutation explosion, but in reality those other remaining steps can all be affected as well. Having more permutations often means invoking that JIT compile step more times, which can really add up. Keep in mind this is usually not a simple translation that’s happening here: most drivers will boot up a full-fledged optimizing compiler (often LLVM) and then run your bytecode through that in order to produce the final ISA. This can really catch you off guard if you’re just expecting to quickly create all your PSOs in the background as you stream in a new chunk of the world: you have to anticipate that creating those PSOs is going to consume considerable time and CPU resources which in turn affects your your effective load times. Even worse, the amount of time can vary depending on the vendor, exact GPU architecture, the exact driver version, the other states and shader stages you’ve provided in the PSO description, and even whether or not this is the first time creating that exact PSO on the system!1 It’s true that D3D12 and Vulkan both provide mechanisms for manual caching of PSOs by the application, however these APIs tend to be complex and still don’t help your “first boot” loading times. Valve has even gone so far as to integrate a distributed caching system into Steam that works for OpenGL and Vulkan, which actually says a lot about how bad of a problem this can be!

Even once you’ve got your PSOs created, the downsides of unchecked permutation explosion continue. To use those PSOs, you need to bind them prior to a draw or dispatch on a command buffer. This binding step consumes CPU time: issuing many draws with a single PSO can be significantly cheaper than switching PSO between each draw. The end result is that the CPU time required for generating command buffers goes up as you increase your shader/PSO count. Having many PSOs can also prevent you from using techiniques such as instancing or batching to combine things into a single draw or dispatch, which also increases CPU time. But that’s not all: unless you’re using Nvidia-specific extensions, both D3D12 and Vulkan are incapable of changing PSOs from the GPU in the middle of a ExecuteIndirect or DrawIndirect/DispatchIndirect call.2 This means that if you’re looking to use fancy GPU-driven techniques for culling and computing LOD on the GPU, you will need at minimum a number of ExecuteIndirect/DrawIndirect calls that is equal to the number of PSOs used by those draws. Too many PSOs/shaders can also pose issues for ray tracing, since large shader tables can lead to divergence and inefficient hardware utilization.

Finally, there’s the execution of the shader program on the GPU itself. Modern GPUs have a lot of tricks in them to keep performance acceptable even in the presence of many draw calls, and many shader switches. However, in the general case they are still going to achieve higher performance when you can feed them larger batches of work to do that don’t require switching states and shaders. As a consequence, your GPU performance can be reduced as a result of switching shaders too often. The same goes for your CPU performance: switching shaders/PSOs often involves more expensive operations in the driver that are required to reconfigure the necessary state bits on the GPU, and also handle downstream impacts of switching shaders (such as the low-level representation of the resource bindings used by the shaders). Therefore if you really want to burn through lots of draw calls in a few milliseconds of CPU time, you’ll want to aim for as few PSOs and PSO switches as you can.

The Monolithic Shader Program

In my earlier example where I shared a simple pixel shader with permutations, you may have noticed how each permutation required compiling the entire shader program all the way through. This idea is probably a bit weird if you’re not used to shader programming: after all, that’s not really how we do things for our CPU code. For a language like C or C++ we will typically compile much more modular sections of code into separate compilation units, and then link everything together through function calls and global variables. Or we might go even further and compile our code into completely separate executables (DLLs or SOs) that can be dynamically loaded and called into at runtime, giving us further flexibility in how we structure things. Why can’t we do things like that with shaders? Why do shaders have to be so monolithic instead of being modular? To answer that, let’s step back in time a bit.

If you look back to the start of shader programs, they started out small. Not in the metaphorical sense of “small ideas and limited use cases” (which is true, but not what I’m talking about here) but in the very literal sense of “they were very very short”. The original Shader Model 1.0 introduced with D3D8 had a hard limit of 128 instructions for vertex shaders, and the pixel shaders were basically just directly exposing the (very limited) register combiner and texture functionality of the hardware of that time. At that point you would have been crazy to think of a shader as anything other than a tiny little self-contained program or routine: they were only a handful of instructions! It wasn’t even until D3D9 and later that people authored them in an actual high-level shader language instead of hand-writing “assembly”.

The thing about shaders is that we never really shook that kind of “small self-contained program” mentality. For years we basically just extended it further and further: first with higher instruction counts, then with more features, then with fancier compilers. But all along the way we kept the model where a shader program is monolithic and completely self-contained. This is not entirely just legacy decisions either: along the way we benefited from some of the halo effects that come from this really simple model. Shaders never needed a linker since there was nothing to link, and there was never a need to have a standard ABI for calling between separately-compiled binaries. In fact there’s historically been no function calls at all within a compiled shader program! Why do that when you can instead just ruthlessly rip through the shader code and inline everything until you’re left with nothing but a linear stream of instructions. If you’re coming from a C++ background you’re probably used to seeing the optimizer pull some heroics in certain places, but shaders can be on another level due to the simplicity of the language: after all there’s no constructors, no side effects, and general memory writes weren’t even added until Shader Model 5.0! Let’s look at another simple HLSL pixel shader to show you what I mean by this:

float3 ComputeLighting(in float3 albedo, in float3 normal, in Light light)

{

return saturate(dot(normal, light.Dir)) * albedo * light.Color;

}

float PSMain(in float3 albedo : ALBEDO, in float3 normal : NORMAL) : SV_Target0

{

return ComputeLighting(albedo, normalize(normal), CBuffer.Light).x;

}

If you were expecting the compiler to emit instructions for ComputeLighting independently, you would probably expect it to perform the chain of multiplication using float3 vectors, resulting in 6 total multiplications after the saturated dot product is computed. You would also expect PSMain to receive that float3 result, ignore the Y/Z components, and then return the X component. In reality shader compilers have never worked this way: instead they would fully inline/flatten the function call and dead-strip like their life depended on it, resulting in generated assembly that was more equivalent to this code:

float PSMain(in float3 albedo : ALBEDO, in float3 normal : NORMAL) : SV_Target0

{

return saturate(dot(normal, light.Dir)) * albedo.x * light.Color.x;

}

This is not really magic or anything, and you can see a C or C++ compiler do the same thing in similar places. But with shaders it’s different because you’re almost guaranteed to get that optimized and inlined result due to the simplicity of the programming model. This is really really great if you once again go back to the earlier SM 2.0 era of shaders: you really needed to penny pinch your instructions to avoid hitting instruction limits, and when you were running these programs across thousands of pixels each little add or multiply could really add up quickly. The “flatten everything!” style of code-gen also suited earlier programmable GPUs particularly well, since they either couldn’t do dynamic flow control or they were very very bad at it. Thus it was to your advantage to be able to take shader code written in a branchy or loopy style and convert that into something completely flattened and unrolled whereever possible.

Modern GPUs can still benefit from these kinds of agressive micro-optimizations. Sure a recent GPU can do an awful lot more MADDs per second than a GPU from 2005, but extra math can still add up when you’re doing it for millions of pixels every frame. They’re also significantly better at actual branching and loops then they used to be (at least as long as the flow control is “uniform”, which means that all threads within a wave/subgroup take the same path), but flow control is never 100% free. Once again a few extra instructions for iterating a loop control variable and checking the condition can add up if it’s done many times, and you might be better off letting the compiler unroll that for you. Flow control can also inhibit certain kinds of optimizations that compilers like to do where they overlap memory or math instructions from unrelated code in order to extract additional instruction level parallelism (or ILP for short), which can potentially decrease the latency of a wave in isolation.

Even if you ignore the optimization aspect, there’s still the fact that GPU shader cores are traditionally not really setup to handle true function calls or dynamic dispatch. For various reasons, these shader cores tend to use a simple register-only SIMD execution model with no true stack. This naturally makes it trickier to have the concept of a standard ABI, and things get especially tricky if you want to support diverging function calls within a wave. Shader cores also typically use a model where waves must statically allocate all of the registers they will ever need for the entire duration of the program, as opposed to a more dynamic model that might involve spilling to the stack. Keeping that register count low is good for occupancy (which is yet another reason why aggressive micro-optimization can help), and so it’s important you only allocate what you truly need. This means you have a problem to solve if you want to let a program jump out to arbitrary code: the wave has to statically allocate sufficient registers before it can call into some function. Even if you know all of the possible places that the shader might jump to, everybody will be limited to the occupancy of the worst-case target. 3 These are not necessarily unsolveable problems, but they help explain why it’s been easier not to rock the boat and just stick with a monolithic shader program.

Are We Too Focused On Micro-Optimization?

If we pull back the camera a bit, one of the broad themes we see in the rush to over-permute our shaders is a focus on micro-optimization. If your goals include “I want to let the compiler generate its idea of the best possible shader program for a given feature set”, then you’re pretty much guaranteed to end up with permutation hell once you have a non-trivial feature set. In a way this is pretty natural: gating a new feature behind a permutation lets you look at things more in isolation, and give you peace of mind that whatever crazy thing you do in that shader is going to have zero impact on the 99% of materials that don’t use it. In my experience that freedom can significantly lower the barrier of entry for new features: I don’t mind saying “yes” to an artist’s feature request if I know it’s wrapped up in a preprocessor macro. This kind of thinking can also naturally come out of situations where you’re optimizing some existing problematic material that your artists came up with, where you end up wanting to add a permutation that unrolls some loop or strips out a pointless texture fetch.

Broadly speaking, I think that breaking out of permutation jail requires a shift in mindset. Instead of looking at things one material at a time and focusing on lost cycles here and there, you need to pull back and look at GPU performance holistically. No wave of a shader program runs in isolation: we need to keep occupancy high and I$ pressure low while also reducing stalls from having too many shader switches or draw batches. We can’t overlook techniques that require spilling to memory or splitting things up into multiple passes if in the aggregate those techniques end up boosting utilization and throughput. Perhaps it’s ok if our simplest material is a little bit slower if we can ensure that all of our shaders end up faster overall. We also need to think more globally about the effects of our shaders on the full development pipeline: what’s the cost to productivity if we add another permutation and double the shader count?

It’s funny too because the micro-heavy approach is in some ways very counter-productive to the goals of getting “better” generated programs. Having so many permutations can actually make it much more difficult for a programmer to do actual hand-optimization of the shader source code, both because of the added iteration times but also because there’s just too many possible permutations to focus on! Some of the heavy optimizations performed by compilers can also be surprisingly bad for overall performance. In particular aggressive unrolling and overlapping of instructions tends to eat up registers very quickly, which can result in reduced occupancy. In other words these optimizations might have a small reduction to the latency of a single wave but can be actively harmful to the GPU’s efforts to boost overall throughput by cycling through different active waves. This can be quite frustrating to deal with, since sometimes it feels like you have to trick the compiler into generating code that uses fewer registers. You can’t even totally blame the compiler really since it doesn’t really have the full picture: it doesn’t know what else will be happening on the GPU at the time the shader executes, and it also doesn’t know the expected latency of a memory operation (maybe it was in cache, or maybe it wasn’t!).

With all that said, it’s a lot easier to just say “take a global approach to GPU performance” than it is to pull it off in practice. You likely need excellent tooling and test cases to make sure you’re getting data that isn’t misleading, and you also need to analyze quite a few stats to make informed decisions. Think for a moment about what you might need to consider in terms of your global GPU performance when deciding whether or not to split off another permutation of a shader, or perhaps unroll a loop in that shader. Off the top of my head, I can come up with a non-comprehensive list of considerations that’s pretty long:

- Time Spent Compiling From Source

- How fast or slow is the compiler itself?

- Do various compile options affect compilation time?

- Does the speed change over time with newer versions?

- Can we compile on multiple threads?

- If so, how many cores are available on the local machine?

- Can we distribute across multiple machines?

- If so, how many remote machines are typically available?

- What is the latency of sending over code and spinning up compilation on a remote machine?

- Are those remote machines available to people who aren’t on in a central office on the same LAN?

- How does compilation and optimization time scale with shader code length and number of emitted instructions?

- How much does shader compilation negatively effect the productivity of graphics programmers?

- Can programmers compile only a subset of permutations when testing a change, or do they need to compile all of them?

- How quick is a change + compile + measure loop for optimizing shaders?

- How much does shader compilation affect the productivity of non-programmers?

- Do material artists need to recompile many shaders when creating or editing materials?

- Are compilation results cached across the team, or does everybody have to recompile the same shaders?

- How fast or slow is the compiler itself?

- Time Spent Generating Hardware ISA

- Can this happen ahead-of-time (consoles), or do we need to JIT compile at runtime (PC)?

- How fast is the JIT compile?

- Does that speed change over time or with different drivers?

- What kind of caching is available?

- Number of shaders and PSOs loaded at runtime

- How much memory do they consume? Can you even measure it?

- How quickly can your code, the runtime, and the driver switch PSOs in terms of CPU time?

- Does that time vary based on the bindings used for the shader?

- How does switching shaders affect GPU performance?

- Length and size of individual shaders

- How does this affect runtime memory consumption?

- How does it affect PSO creation time?

- Does it increase pressure on the shader instruction cache, degrading performance?

- Does having long shaders that sample many textures increase L1/L2 cache thrashing, reducing performance?

- Does having a longer shader give the compiler more opportunities to dead-strip unnecessary instructions, and interleave memory and math operations?

- Number of individual passes required to render a frame

- How much memory bandwidth do we consume by writing out intermediate results vs. keeping them resident in registers?

- Register pressure and occupancy

- How many registers do our shaders consume, and what is the resulting occupancy?

- Is lower occupancy reducing performance by not giving the hardware the ability to hide long latency memory access?

- Is high occupancy reducing performance by causing L1 cache thrashing?

- Can register usage and occupancy be calculated with offline tools, and are there any tools available for analyzing and optimizing register usage?

- ILP of the shader programs

- Can the shader program hide latency of memory access by executing ALU-heavy instructions while waiting for memory operations to complete?

- Can multiple ALU instructions execute in a pipelined manner, increasing IPC?

- How many registers are utilized for latency hiding and pipelining?

- Does hiding latency require unrolling loops, increasing the size and register usage of the shader program?

- Number of features required for creating content

- What functionality do artists need to create content quickly and at a satisfying quality level?

- What features are needed for gameplay functionality? For example, changing colors based on team or building certain kinds of UI into the material.

- Do content creators have the ability to write their own shaders for game-specific purposes, either by hand-writing HLSL or using a shader graph?

It’s…a lot. This list doesn’t even consider the non-technical aspects of working with and art team to explain to them the trade-offs in terms of their workflow and what kind of features they can use. Don’t let anybody ever tell you that this stuff is easy!

Coming Up in Part 2

In the final part of this series, I’m going to look at a list of techniques we can employ that potentially help us to dig ourselves at out of the hole that we created.

-

The PC GPU vendors all implement driver-side caches for the native ISA produced as part of creating a PSO, which can cause cold boots to take significantly longer than subsequent runs. ↩︎

-

Metal can actually change the PSO within an indirect command buffer, which is very cool! ↩︎

-

Dynamic branching can have a similiar performance issue, where a block of code with high register usage can lower occupancy for the entire program even if that branch is never taken! This is another contributor to (over-)using permutations instead of runtime branches. ↩︎